To conclude, I feel that my interactive installation has taught me a lot about iterative design. I constantly had to improve and develop my idea through prototyping and research in order to achieve the outcome I wanted.

Music embodied cognition was recognised by at least one person, who noticed his own feeling of embodiment with the piece, while others were experiencing embodiment through laughter and dance without even realising it.

I also feel that a form of augmented reality was achieved, as the users were able to see a computer-generated version of themselves, which also inspired laughter, dance and a positive mood.

My music choice also seemed to be effective, as everyone interacted through dance to some extent, and one user even commented, “Love the track”.

Although I am happy with the outcome, this is still ultimately a prototype that can be developed further. People mentioned that it would be more vibrant and entertaining with the addition of colour and maybe more interactive graphics, and a friend of mine commented that the installation could have potential in clubs and bars.

I feel that Processing has taught me a lot about programming, even though I have only really scratched the surface.

Below is my final code.

import SimpleOpenNI.*;

import ddf.minim.*;

Minim minim;

AudioPlayer player;

SimpleOpenNI context;

PImage img;

void setup(){

size(640, 480);

// we pass this to Minim so that it can load files from the data directory

minim = new Minim(this);

player = minim.loadFile("Vanross_-_Horizon_Foundry_Louder_Master.mp3");

player.play();

context = new SimpleOpenNI(this);

context.enableDepth();

context.enableUser();

context.setMirror(true);

img=createImage(640,480,RGB);

img.loadPixels();

}

void draw(){

background(255);

context.update();

PImage depthImage=context.depthImage();

depthImage.loadPixels();

int[] upix=context.userMap();

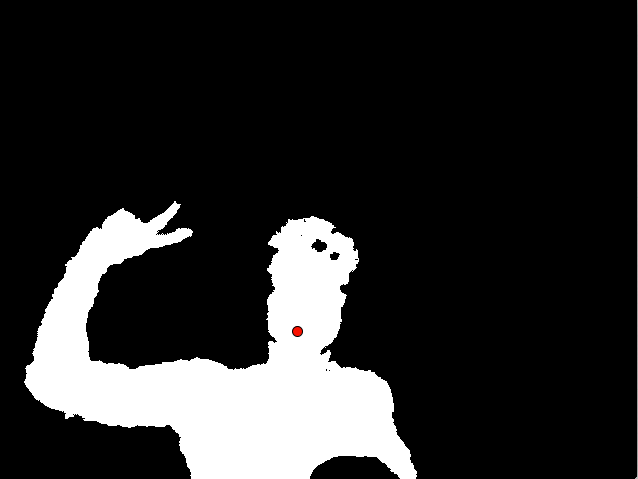

for(int i=0; i < upix.length; i++){

if(upix[i] > 0){

// pixel belongs to a detected user: draw it white

img.pixels[i]=color(255,255,255);

}else{

// background pixel: draw it black

img.pixels[i]=color(0);//depthImage.pixels[i];

}

}

img.updatePixels();

image(img,0,0);

int[] users=context.getUsers();

ellipseMode(CENTER);

for(int i=0; i < users.length; i++){

int uid=users[i];

PVector realCoM=new PVector();

context.getCoM(uid,realCoM);

PVector projCoM=new PVector();

context.convertRealWorldToProjective(realCoM, projCoM);

fill(255,0,0);

ellipse(projCoM.x,projCoM.y,10,10);

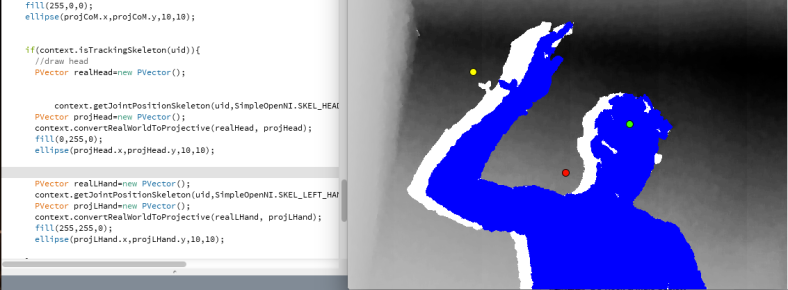

if(context.isTrackingSkeleton(uid)){

//draw head

PVector realHead=new PVector();

context.getJointPositionSkeleton(uid,SimpleOpenNI.SKEL_HEAD,realHead);

PVector projHead=new PVector();

context.convertRealWorldToProjective(realHead, projHead);

fill(0,255,0);

ellipse(projHead.x,projHead.y,10,10);

PVector realLHand=new PVector();

context.getJointPositionSkeleton(uid,SimpleOpenNI.SKEL_LEFT_HAND,realLHand);

PVector projLHand=new PVector();

context.convertRealWorldToProjective(realLHand, projLHand);

fill(255,255,0);

ellipse(projLHand.x,projLHand.y,10,10);

float y1, y2, yA;

yA = height * 0.5;

y1 = height * 0.25;

for(int g = 0; g < player.bufferSize() - 1; g++)

{

float x1 = map( g, 0, player.bufferSize(), 0, width );

float x2 = map( g+1, 0, player.bufferSize(), 0, width );

line( x1, y1 + player.left.get(g)*yA, x2, y1 + player.left.get(g+1)*yA );

line( x1, 150 + player.right.get(g)*50, x2, 150 + player.right.get(g+1)*50 );

}

}

}

}
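To make the silhouette step in draw() easier to see on its own, here is a minimal sketch in plain Java. It is only an illustration under assumptions: the class name SilhouetteMask and the method mask are hypothetical (they are not part of SimpleOpenNI or Processing), and the input is assumed to be a user map with one entry per pixel, non-zero wherever a user was detected.

```java
// Hypothetical helper for illustration only; not part of SimpleOpenNI or Processing.
final class SilhouetteMask {
    // Packed ARGB values equal to Processing's color(255, 255, 255) and color(0).
    static final int WHITE = 0xFFFFFFFF;
    static final int BLACK = 0xFF000000;

    // Assumption: userMap has one entry per pixel and is non-zero where a user was detected.
    static int[] mask(int[] userMap) {
        int[] pixels = new int[userMap.length];
        for (int i = 0; i < userMap.length; i++) {
            // User pixels become white, everything else becomes black.
            pixels[i] = (userMap[i] > 0) ? WHITE : BLACK;
        }
        return pixels;
    }
}
```

In the sketch above, the equivalent of this loop writes straight into img.pixels, so the white shape on the black background is the "computer generated version" of each user.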

Due to difficulty with copyright, I contacted one of my close friends, a popular DJ in Portsmouth under the alias Vanross, who has granted me permission to use his track ‘Horizon’.
